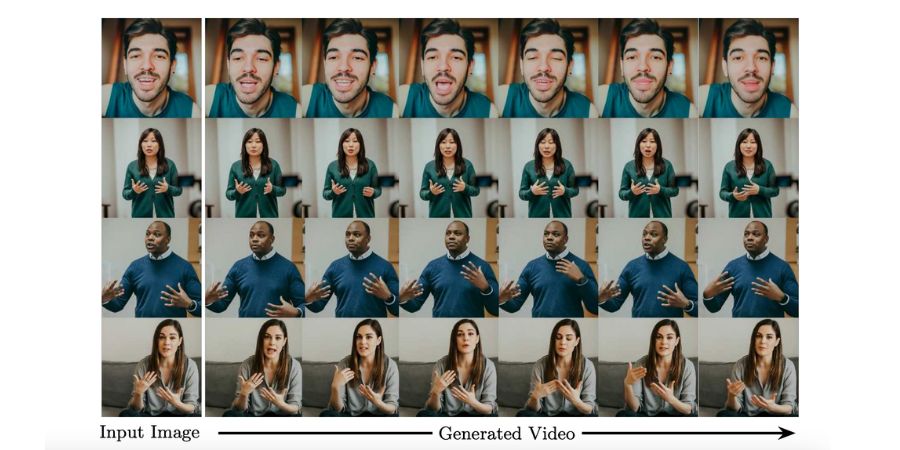

Google researchers have developed a new AI system VLOGGER that can generate realistic videos from a single still photo. VLOGGER uses advanced machine learning models to make a person speak, gesture, and move just by using a single still photo of the person.

It is a system for text and audio-driven talking human video generation from a single photo of a person. The system first takes an audio waveform to create ‘body motion controls’ for gaze, facial expression, and pose. Then it uses the ‘temporal image-to-image translation model’ to predict body controls to generate the corresponding frames.

Researchers claim that the system can also be used to make an existing video, especially in video translation. Provide the translated audio in any other language and the system will lip and face areas to be consistent with the new audio.

We have already seen several AI tools that take a photo and then convert it into a video, but these tools aren’t good. Anyone can quickly tell it’s not real. We cannot try Google VLOGGER yet, but can expect better results from it.